Robots that dance, but can’t pour juice: Why the hype around AI machines falls short

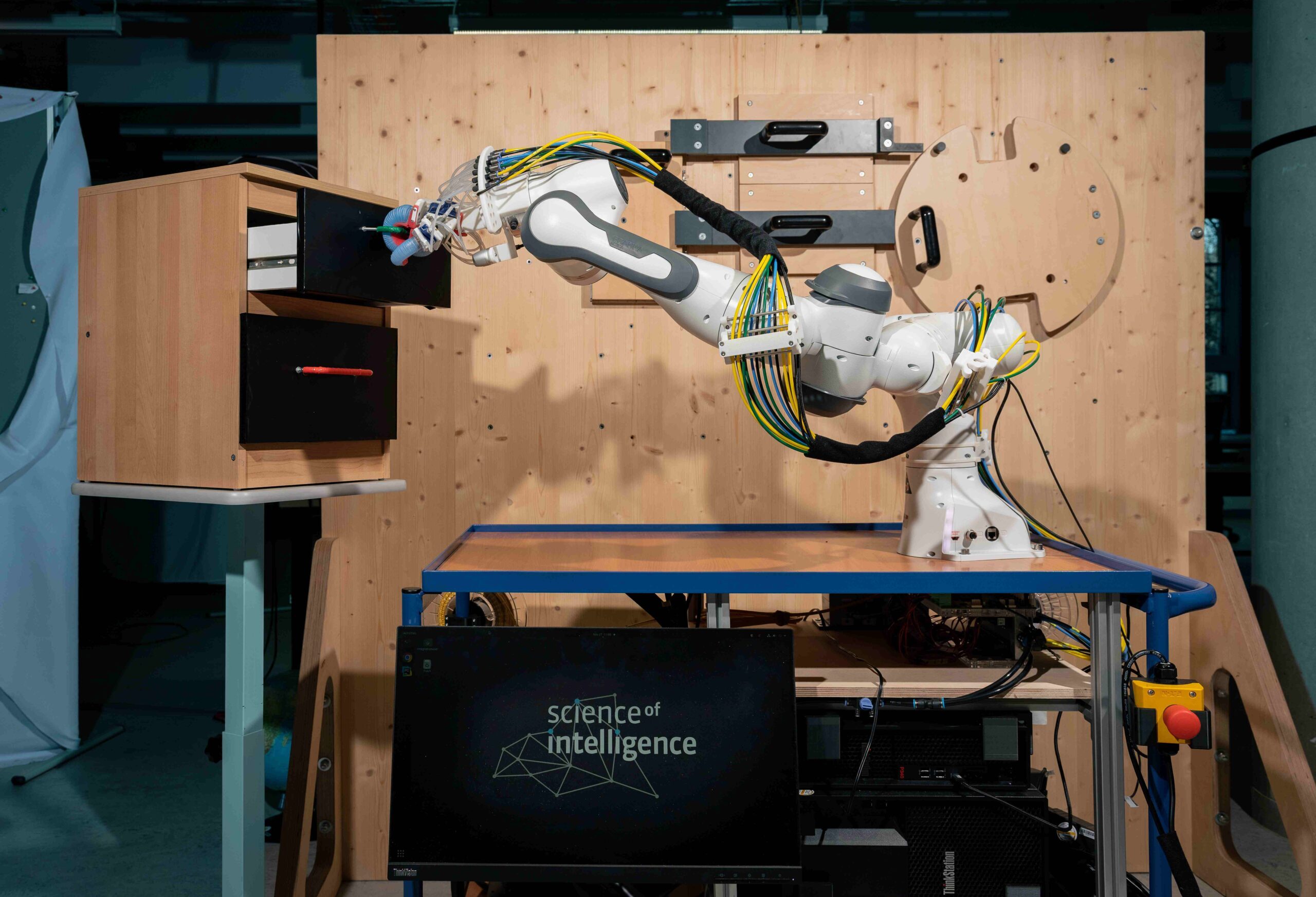

Roboticist and SCIoI Spokesperson Oliver Brock in an interview with Rundfunk Berlin-Brandenburg (rbb).

They flip, they fight, and they dance with uncanny precision. Humanoid robots performing kung fu routines or backflips have become a staple of today’s tech coverage, fueling awe, concern, and a sense that we will soon live in a world not so different from science fiction. But according to Oliver Brock, one of Germany’s leading roboticists, that impression is misleading. In a recent interview with Wissenswerte, a program by German public broadcaster Rundfunk Berlin-Brandenburg, he offers a counterpoint to the viral robot videos currently dominating headlines, explaining what today’s machines can actually do and where the public conversation is getting it wrong.

Listen to the interview (German) on rbb inforadio.

“The first reaction is the same for everyone: wow,” Oliver says. “But once you look closer, you realize nothing fundamentally new has happened.”

Behind the spectacle, he argues, lies a much more modest reality. The robots dazzling audiences online are typically executing highly controlled, pre-programmed movements. They may look fluid and lifelike, but they do not understand what they are doing. Even more crucially, they are not interacting meaningfully with the world around them.

This disconnect points to a broader problem in how robotics is portrayed in the media. Human-like motion and appearance trigger a powerful cognitive bias: we instinctively assume that something that moves like us and looks like us must also think like us. That assumption, Oliver stresses, is wrong.

In fact, the real challenge in robotics is not movement but understanding. Tasks that appear trivial to humans, such as finding a glass, opening a bottle, or navigating a cluttered kitchen, remain enormously difficult for machines. While a robot can be trained to perform a perfect backflip, it will still struggle to pour a glass of juice in an unfamiliar environment.

This paradox has been known for decades. In the 1980s, researchers formulated what is now called Moravec’s Paradox: tasks that are hard for humans, like playing chess or processing language, are relatively easy for computers. But the things humans do effortlessly, like perception, coordination, and common sense, are extraordinarily hard to replicate in machines.

That insight is especially relevant today, as advances in artificial intelligence, particularly in language models, dominate headlines. Systems that can generate fluent text are often perceived as intelligent. But Oliver warns against equating language with understanding. These systems, he notes, are fundamentally statistical; they predict likely sequences of words rather than grasping meaning in a human sense.

The real frontier lies in enabling machines to deal with the messy, unpredictable nature of the real world.