SCIoI researcher Runfeng Qu presents new computer-vision work at WACV 2026

Runfeng Qu, a doctoral researcher at SCIoI, will present his latest paper at the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV) 2026, taking place 6–10 March 2026 in Tucson, Arizona. The conference is widely regarded as one of the leading international venues for applied computer vision, bringing together academic and industry researchers working on how machines interpret visual scenes in settings that resemble real-world use rather than simplified lab examples.

Runfeng's research connects directly to this focus. At SCIoI, he studies how humans visually explore environments: where we look, how attention shifts depending on the task, and what information we retain. Alongside that, he develops computational models that aim to capture similar mechanisms in artificial vision systems. The paper he is presenting comes out of this modelling work.

When AI misreads what’s happening in an image

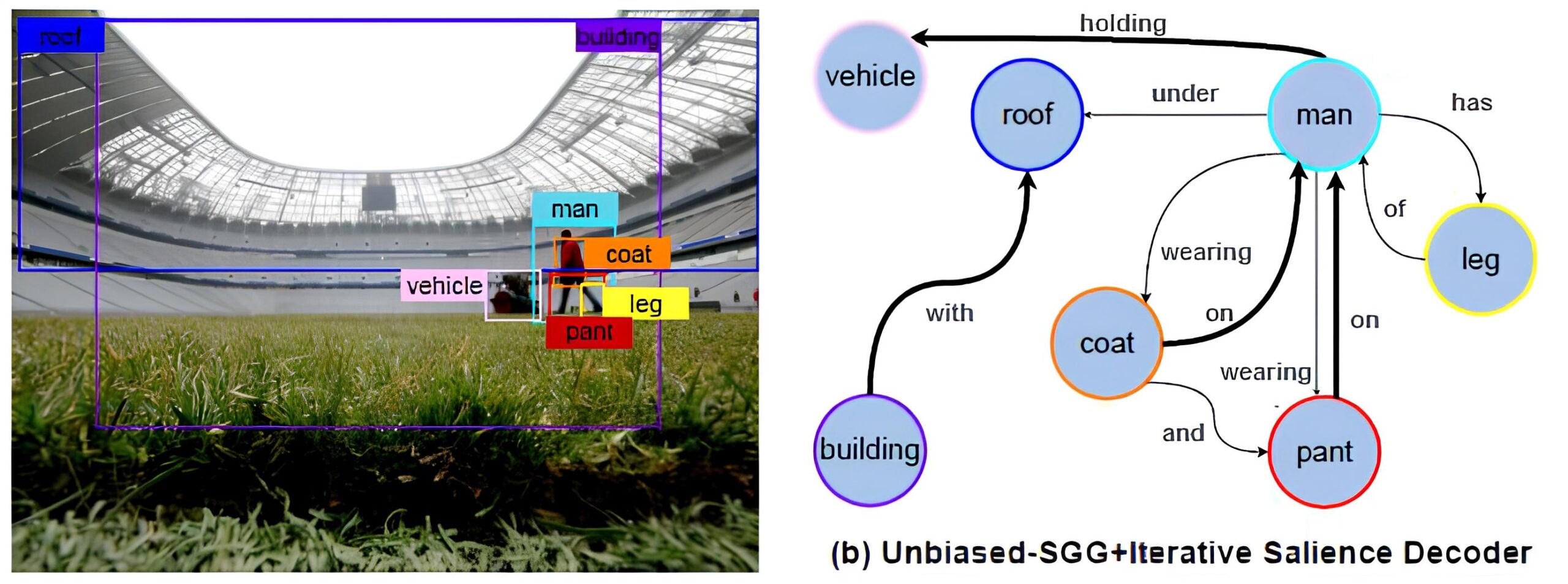

His paper, “Salience-SGG: Enhancing Unbiased Scene Graph Generation with Iterative Salience Estimation,” focuses on scene graph generation (SGG). SGG models describe an image not just by detecting the objects in it but by stating how those objects relate to each other, for example “person holding cup” or “bike leaning against wall.” This relational understanding matters in robotics, image search, and AI systems that combine vision with language.

A well-known issue in SGG is dataset imbalance: training data contains many examples of common relationships but far fewer of rare ones. Methods designed to correct this imbalance sometimes overshoot. The model starts predicting unusual relationships because they are statistically emphasized, even when the spatial evidence in the image does not support them. A person standing next to a beach chair, for example, might incorrectly be labelled as sitting on it.

Runfeng’s contribution addresses this by adding a step that explicitly estimates how strongly two objects are connected in the image, based on their spatial arrangement. In practice, the model repeatedly checks whether an object pair actually forms a visually plausible configuration (for example, whether their positions, distances, and overlap are consistent with a realistic interaction) before settling on a predicted relationship. This reduces the model's tendency to guess relationships purely from statistical frequency.

Links to earlier SCIoI perception research

The work builds on earlier SCIoI projects that used eye movements to examine how people explore visual environments. These studies suggested that object-level representations help connect low-level visual salience with goal-directed attention. Runfeng’s modelling work explores how comparable principles can improve artificial vision systems, especially those that must interpret complex scenes.

Within the cluster, this is sometimes described as the “synthetic” side of the research loop: findings about human perception feed into computational models, which are then tested in artificial systems.

From human perception to AI vision

The project reflects a question that comes up often in the cluster: how far insights about human perception can actually improve artificial vision systems. Presenting at WACV gives Runfeng a chance to test that idea in a community focused on practical computer-vision problems, and to see where the approach holds up and where it needs refinement.